It all started when I wanted to analyze the color of some out-of-calibration projectors with potentially aged bulbs, to see if I could create a “poorly calibrated projector” LUT for examining more closely how poor projector quality affects a graded image. Why is a tale for another time; suffice it to say, it’s a research project.

I have a Klein K-10 Colorimeter, which I originally intended to use for the project. But when I discussed my plan with Bram Desmet at Flanders Scientific, an extremely knowledgeable fellow when it comes to display calibration, he pointed out that a Colorimeter would be unsuitable for my purposes. The potentially aged bulbs of the projectors I needed to measure would have an unknown spectral distribution, and Colorimeters assume a known spectral distribution for any given device (supplied as a profile for each type of display).

Crap.

Turns out I needed a Spectroradiometer, another kind of color-measuring device, which directly measures the intensity of light at each wavelength across the visible spectrum. That makes it able to accurately measure the spectral distribution of any light source without any other information.

I’ve avoided Spectroradiometers up until now because (a) they’ve traditionally been pretty expensive, and (b) like I said, I’ve already got a Colorimeter. However, given some projects on the horizon, it had occurred to me that it might not be a bad thing to bite the bullet and invest in another measurement instrument, not only for its value in future color research, but also because I could then use it to recalibrate my Colorimeter, since all Colorimeters benefit from periodic recalibration to make sure that everything is being measured accurately.

Of course, it turns out that you ALSO need to get the Spectroradiometer periodically calibrated. And I realized I had no idea how Spectroradiometers got calibrated. I hate not knowing things.

Bram introduced me to Guillermo Keller, President of Colorimetry Research, who graciously invited me to the lab where the Spectroradiometers they make (the CR-250) are calibrated before being shipped out, so I could see the whole process in person.

I’ve written about display calibration before, both on this blog and in my Color Correction Handbook. In order to do color-critical work such as grading a movie, episodic show, or music video for the public’s enjoyment, it’s essential to have a display capable of outputting accurate, standards-compliant video. Displays are made accurate via a calibration procedure whereby thousands of color patches are shown on the display and measured by a color probe of some kind, either a Colorimeter or a Spectroradiometer.

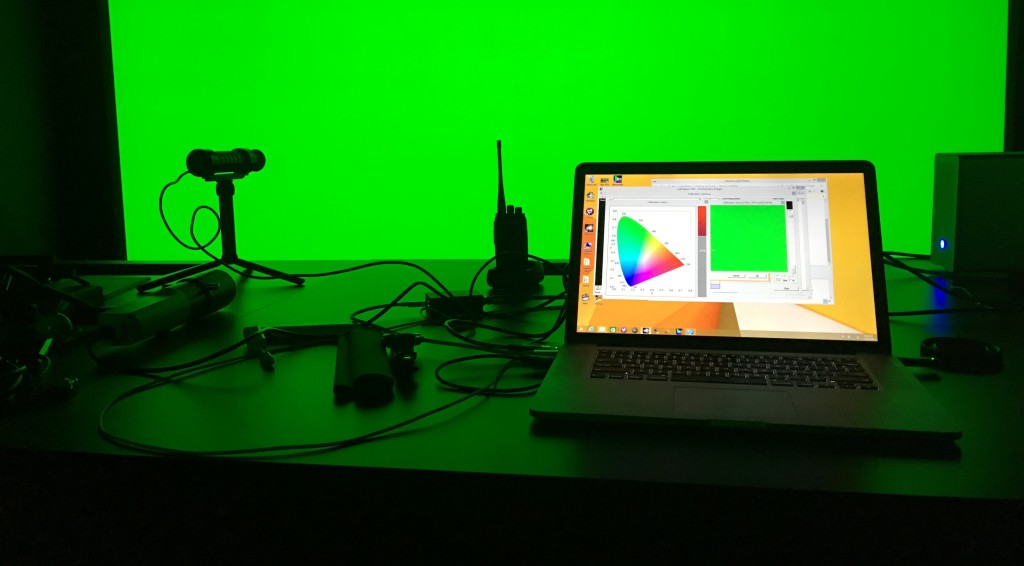

The software that generates the color patches sent to the display and simultaneously records the probe’s measurements (applications include Light Illusion’s LightSpace and SpectraCal’s CalMan) compares the intended color of each patch with the color your display actually emits, and compiles the thousands of measurements into a characterization that describes how that display really reproduces color. The calibration software can then mathematically compare the display’s characterization to the video standard the display is supposed to be outputting (BT.709, P3, or BT.2020), and generate a calibration LUT to load back onto the display (or onto a LUT box feeding the display) so that the display outputs accurate color across its whole range according to the standard in use.
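To make the idea of a calibration LUT a little more concrete, here is a deliberately simplified sketch that builds a one-dimensional, grayscale-only correction curve. Given hypothetical measured luminance at a few input levels, it finds which input level makes the display produce the luminance a pure power-law gamma of 2.4 would call for. Real calibration software such as LightSpace or CalMan builds full 3D LUTs from thousands of color measurements; the function names, the 2.4 target, and the sample readings are all assumptions for illustration.

```python
# Simplified sketch: a 1D grayscale correction LUT. This is NOT how LightSpace
# or CalMan work internally; they build 3D LUTs from full color measurements.

def interp(x, xs, ys):
    """Piecewise-linear interpolation; xs must be increasing."""
    if x <= xs[0]:
        return ys[0]
    if x >= xs[-1]:
        return ys[-1]
    for i in range(1, len(xs)):
        if x <= xs[i]:
            t = (x - xs[i - 1]) / (xs[i] - xs[i - 1])
            return ys[i - 1] + t * (ys[i] - ys[i - 1])
    return ys[-1]


def build_correction_lut(inputs, measured, target_gamma=2.4, size=11):
    """Return `size` corrected input levels (0..1) for evenly spaced requests.

    inputs   -- normalized input levels that were sent to the display
    measured -- normalized luminance measured at each input (must increase)
    """
    lut = []
    for k in range(size):
        v = k / (size - 1)
        wanted = v ** target_gamma      # luminance the (assumed) target asks for
        # Invert the measured response: which input level produces `wanted`?
        lut.append(interp(wanted, measured, inputs))
    return lut


# Hypothetical readings from a display whose response is roughly gamma 2.2.
levels = [i / 10 for i in range(11)]
readings = [v ** 2.2 for v in levels]
lut = build_correction_lut(levels, readings)
print([round(x, 3) for x in lut])
```

Because this imaginary display is a bit brighter in the mids than the target, every corrected input comes out at or below the requested level.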

Display calibration depends on the accuracy of your measuring device, and Colorimeters and Spectroradiometers can subtly drift over time. Unfortunately, it’s not enough to simply buy an expensive probe and put it on your shelf; you need to have your probe of choice recalibrated periodically. Guillermo recommends having both the CR-250 and the CR-100 (Colorimetry Research’s Colorimeter) recalibrated once a year.

Calibration, in fact, is a carefully controlled chain of device measurements. Monitors can be calibrated using Colorimeters. Colorimeters can be calibrated using Spectroradiometers. But how then are Spectroradiometers calibrated?

Very carefully, it turns out, and using equipment that is itself calibrated. The chain of calibration extends all the way back to fundamental components manufactured and performance-tracked by companies such as Gooch and Housego, which are themselves compared against light sources traceable to devices and methods standardized by NIST, the National Institute of Standards and Technology, an agency of the U.S. Department of Commerce. So, if you’re wondering who, through the long chain of calibration, is ultimately responsible for the color accuracy of every movie, television show, promo, and advertisement you watch, it’s the federal government.

But this is going all the way down the rabbit hole. For the film and video practitioner’s practical purposes, it is the calibration of Spectroradiometers upon which the scaffolding of our industry rests, and there are four fundamental procedures involved. Each of these tests relies on taking spectral measurements of a known light source, so the accuracy of everything else depends entirely on how carefully these light sources are maintained.

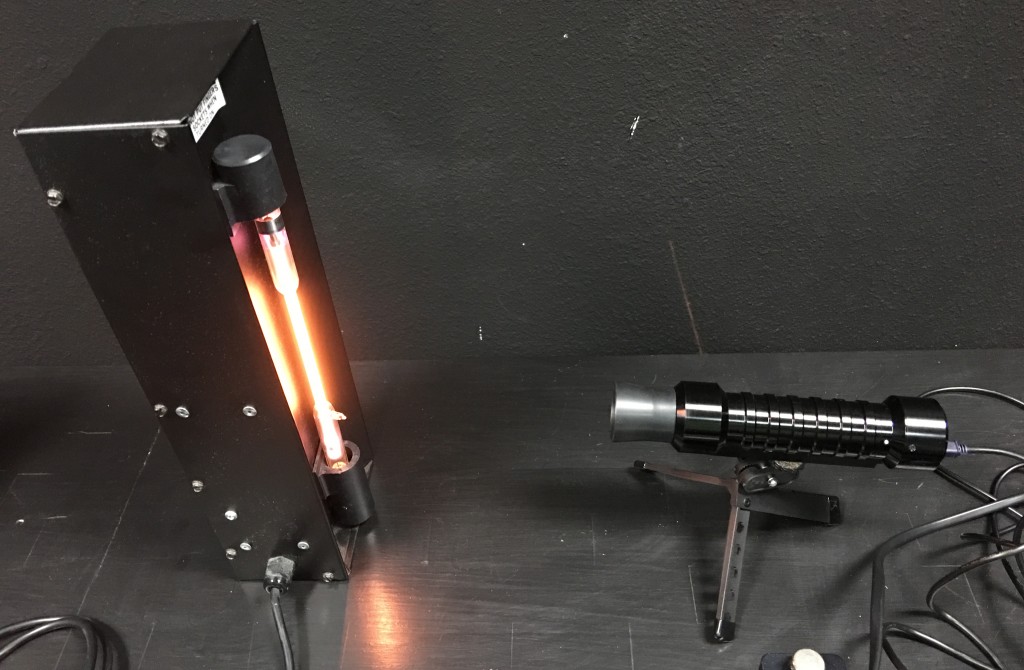

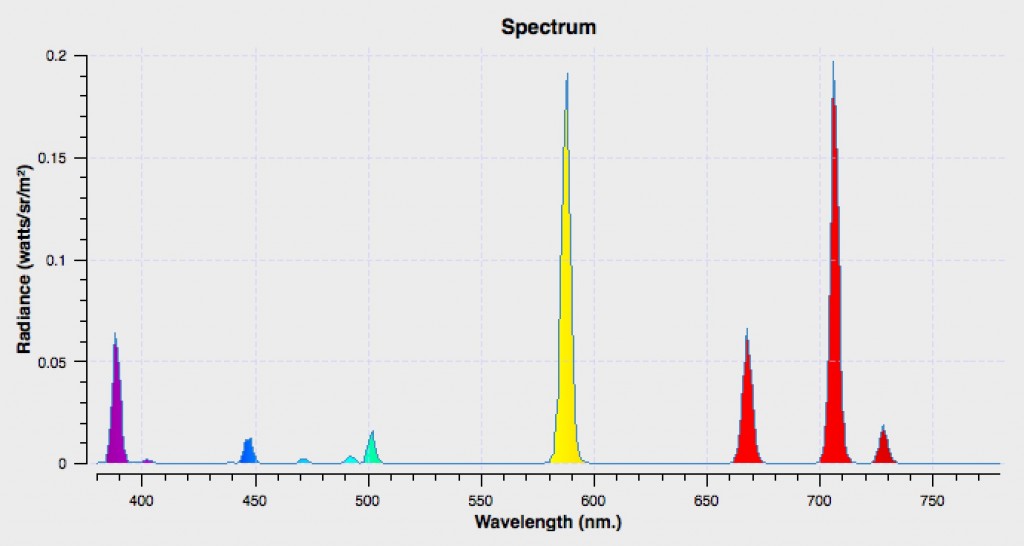

First, a Helium-gas lamp is used to calibrate the Spectroradiometer sensor’s pixel-to-wavelength transformation.

The Helium-gas lamp bulb, which is similar in principle to a neon sign tube, has a unique and utterly reliable spectral distribution that spikes at specific wavelengths. These spikes are clear to see, do not vary, and provide an easy way to calculate the difference between what the probe is reading and the reality of physics. This offset is stored on the probe as a transformation.
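In effect, this step fits a curve through pairs of (pixel position where a helium peak landed, known wavelength of that peak). The sketch below fits a quadratic by least squares. The wavelengths are standard visible He I emission lines; the “measured” pixel positions are fabricated from a hypothetical 250-pixel probe whose true mapping we pretend to know, and the quadratic model is an assumption, not necessarily what Colorimetry Research uses.

```python
import math

# Visible He I emission lines (nm).
HELIUM_LINES_NM = [447.15, 471.31, 492.19, 501.57, 587.56, 667.82, 706.52]


def solve(a, b):
    """Solve the square linear system a·x = b by Gaussian elimination."""
    n = len(b)
    m = [row[:] + [b[i]] for i, row in enumerate(a)]
    for col in range(n):
        pivot = max(range(col, n), key=lambda r: abs(m[r][col]))
        m[col], m[pivot] = m[pivot], m[col]
        for r in range(col + 1, n):
            f = m[r][col] / m[col][col]
            for c in range(col, n + 1):
                m[r][c] -= f * m[col][c]
    x = [0.0] * n
    for r in range(n - 1, -1, -1):
        x[r] = (m[r][n] - sum(m[r][c] * x[c] for c in range(r + 1, n))) / m[r][r]
    return x


def fit_polynomial(xs, ys, degree=2):
    """Least-squares polynomial fit; returns coefficients c0, c1, ..."""
    k = degree + 1
    # Normal equations (V^T V) c = V^T y, where V is the Vandermonde matrix.
    ata = [[sum(x ** (i + j) for x in xs) for j in range(k)] for i in range(k)]
    aty = [sum(y * x ** i for x, y in zip(xs, ys)) for i in range(k)]
    return solve(ata, aty)


def evaluate(coeffs, x):
    return sum(c * x ** i for i, c in enumerate(coeffs))


# Hypothetical "true" optics of an imaginary probe, used ONLY to fabricate
# where each helium peak lands: wavelength = A*p**2 + B*p + C.
A, B, C = 0.0004, 1.5, 380.0


def true_wavelength(p):
    return A * p * p + B * p + C


def peak_pixel(wl):
    # Inverse of true_wavelength (positive root of the quadratic).
    return (-B + math.sqrt(B * B + 4 * A * (wl - C))) / (2 * A)


peaks = [peak_pixel(wl) for wl in HELIUM_LINES_NM]  # "measured" peak centers

# Scaling pixel indices to roughly 0..1 keeps the normal equations well conditioned.
SCALE = 250.0
coeffs = fit_polynomial([p / SCALE for p in peaks], HELIUM_LINES_NM, degree=2)


def pixel_to_wavelength(p):
    """The stored transformation: sensor pixel index -> wavelength in nm."""
    return evaluate(coeffs, p / SCALE)


print(round(pixel_to_wavelength(125), 2))
```

A real probe would locate each peak center from noisy data (for example, by fitting the peak shape), so its fit would not be exact the way this noise-free example is.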

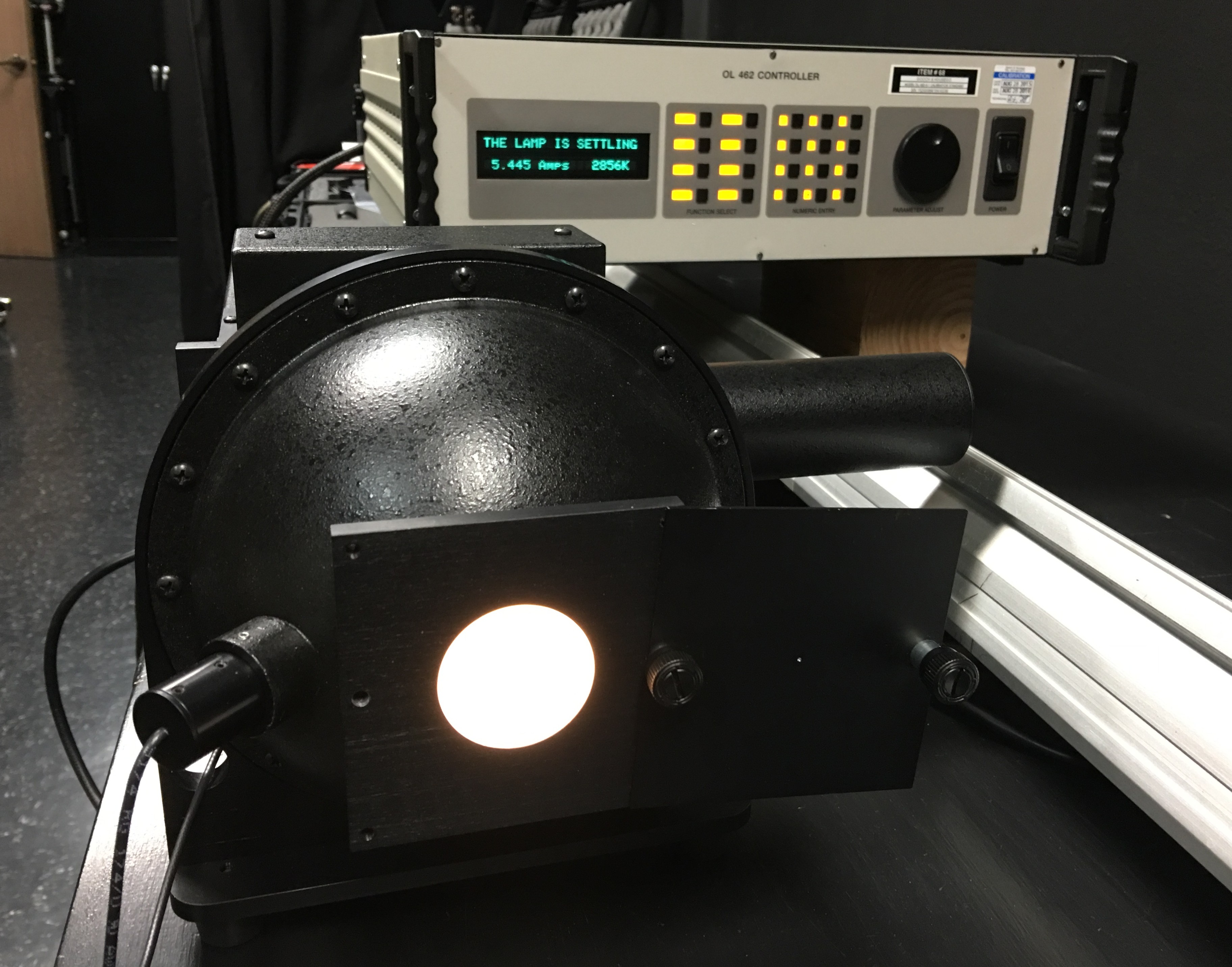

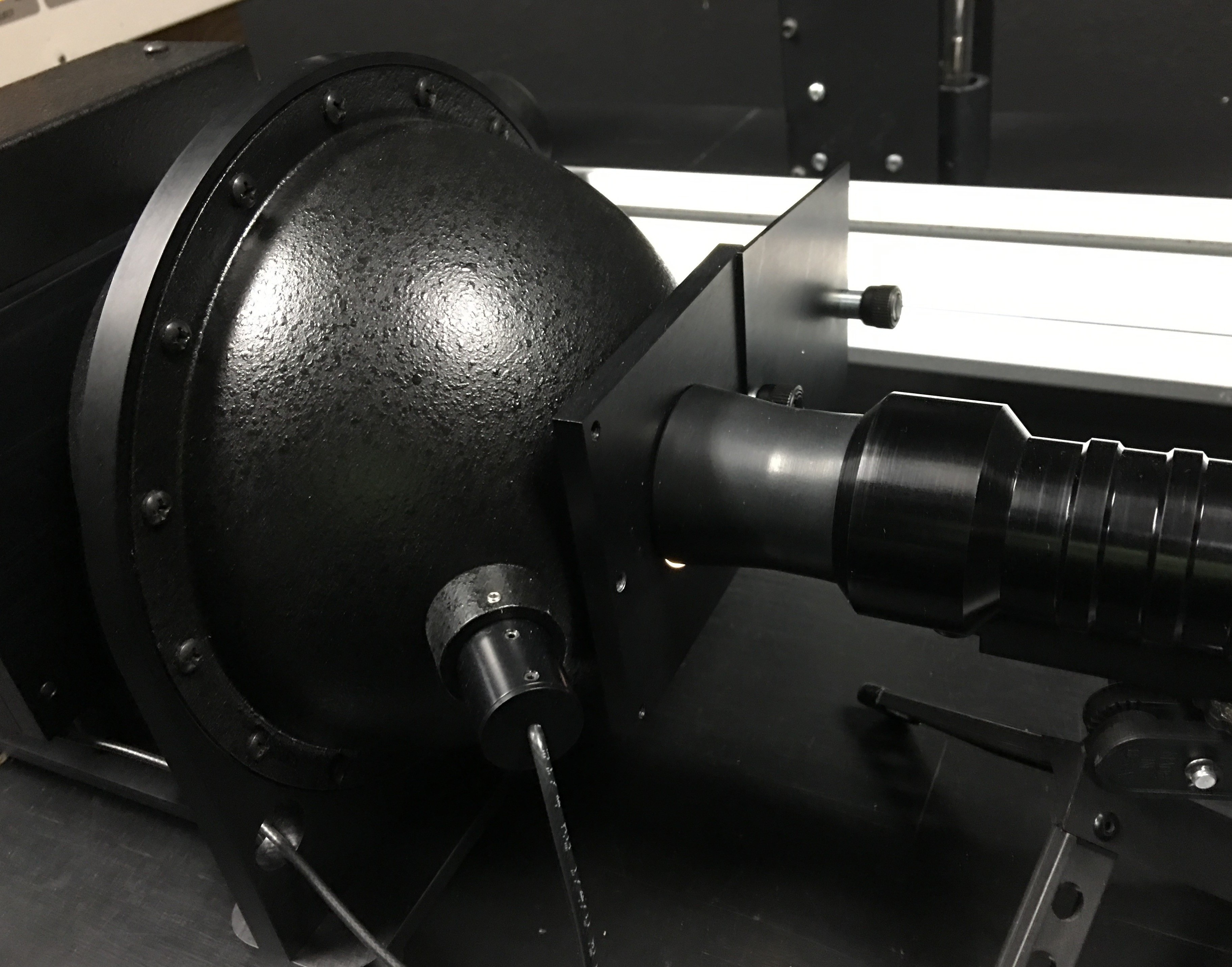

Next, a tungsten light source reflecting diffusely within an integrating sphere is used to calibrate the probe’s reading of spectral distribution.

The integrating sphere is itself calibrated to NIST standards, and the bulb’s usage is carefully timed and recorded, since the whole sphere is periodically sent in to be re-measured. In fact, one of the measures taken to extend the life of this device is to turn it on only by slowly increasing the voltage from zero to full, to prevent voltage spikes from causing unnecessary wear to the bulb.

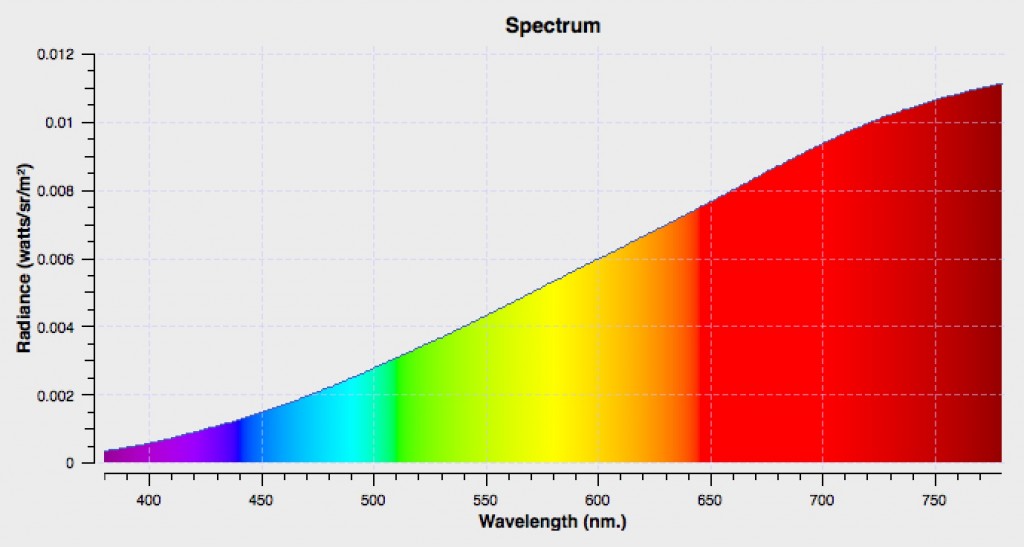

As with the Helium measurement, the difference between the spectral radiance measured as raw linear pixel values (the data the probe’s sensor records through its optics) and the known output of the integrating sphere is used to determine the transform from the probe’s raw pixel values to an accurate reading of spectral radiance. This transform is also stored on the probe.
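A minimal sketch of what such a per-pixel transform could look like, assuming the sensor is linear and the raw counts have already had the dark signal subtracted: divide the sphere’s known spectral radiance at each pixel by the counts per second that pixel recorded, store those factors, and multiply future readings by them. The function names and numbers are hypothetical.

```python
# Hypothetical radiometric calibration sketch (assumes a linear sensor and
# dark-subtracted counts; real instruments also correct for nonlinearity).

def responsivity(raw_counts, exposure_s, reference_radiance):
    """Per-pixel factors converting counts-per-second into spectral radiance.

    raw_counts         -- dark-subtracted raw counts for each pixel
    exposure_s         -- exposure time of the reference measurement (seconds)
    reference_radiance -- known spectral radiance of the reference source at
                          each pixel's wavelength (e.g. W·sr⁻¹·m⁻²·nm⁻¹)
    """
    return [ref / (c / exposure_s) for c, ref in zip(raw_counts, reference_radiance)]


def to_radiance(raw_counts, exposure_s, factors):
    """Apply the stored per-pixel factors to a new raw measurement."""
    return [(c / exposure_s) * f for c, f in zip(raw_counts, factors)]


# Three pixels of an imaginary probe looking at the reference sphere.
factors = responsivity([1000, 2000, 4000], 0.5, [0.01, 0.02, 0.02])

# Measuring the same sphere again with twice the exposure doubles the counts,
# but the calibrated result is unchanged.
print(to_radiance([2000, 4000, 8000], 1.0, factors))
```

Normalizing by exposure time is what lets one stored calibration serve both the short exposures used on bright patches and the long ones used on dark patches.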

Lastly, as an alternate step, the quality of the integrating sphere’s output can be verified by measuring the light of a NIST-traceable tungsten bulb (a $1,000, 200-watt lamp) reflecting off a similarly NIST-standardized diffuse “reflectance standard” from a specific distance. To highlight how picky these devices are, the bulb must be sent in to be re-measured after every 600 minutes of use, with the new measurements factored into subsequent use of that bulb. Meanwhile, the reflectance target, which is composed of compressed chalk-like particles, must be certified as close to 100% reflective.

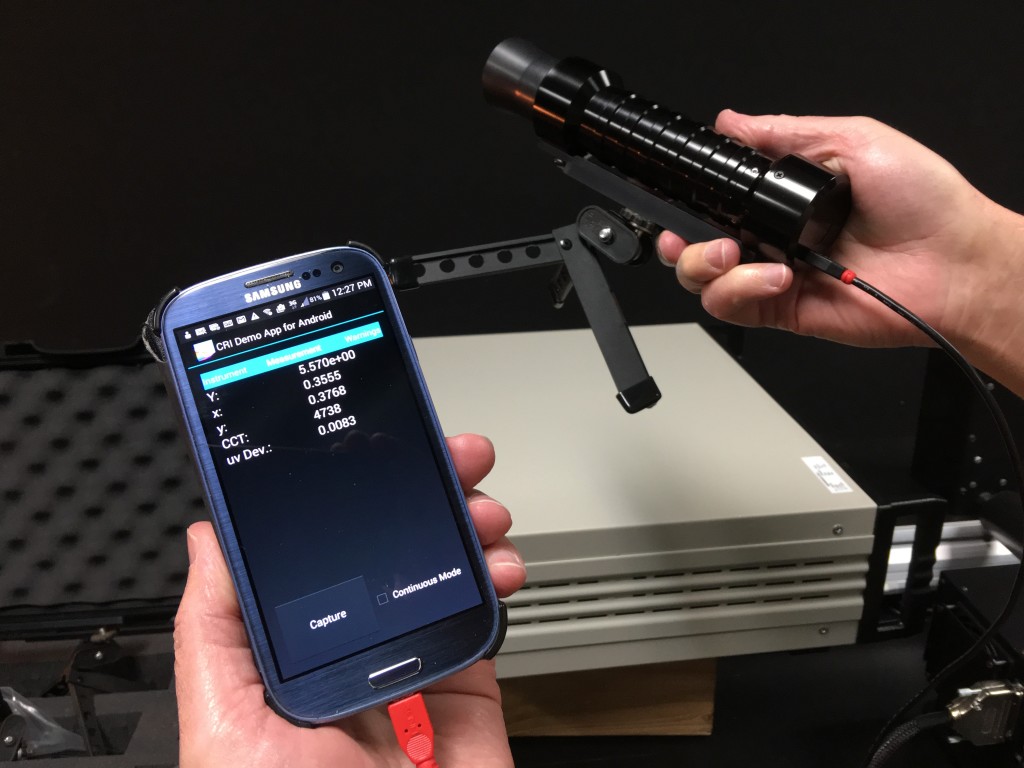

This is only done for spot checking, in order to verify that the integrated sphere is operating correctly. The bulb and reflectance target are mounted a measured distance apart (the intensity of the reflected light is controlled in this way via the inverse square law), with the probe pointed at the target, and another measurement is taken and compared.

Spectroradiometer measuring the NIST traceable bulb reflecting off of the reflectance standard target.
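The inverse square law is what makes the lamp-to-target distance such an effective control: irradiance from a small source falls off with the square of distance. The sketch below scales a lamp’s certified irradiance from its calibration distance to a new mounting distance, then converts it to the radiance the probe would see from an ideal diffuse (Lambertian) reflector, using L = ρE/π. The certified irradiance, the 0.5 m reference distance, and the 0.99 reflectance are hypothetical numbers, and treating the lamp as a point source is an approximation.

```python
import math


def irradiance_at(e_ref, d_ref, d):
    """Inverse square law: irradiance at distance d from a point-like source."""
    return e_ref * (d_ref / d) ** 2


def lambertian_radiance(irradiance, reflectance):
    """Radiance of an ideal diffuse reflector: L = rho * E / pi."""
    return reflectance * irradiance / math.pi


# Hypothetical certificate value: spectral irradiance at one wavelength,
# specified at 0.5 m from the lamp.
E_REF = 0.25   # W·m⁻²·nm⁻¹ at D_REF (made-up number)
D_REF = 0.5    # meters

e = irradiance_at(E_REF, D_REF, 1.0)    # doubling the distance quarters it
L = lambertian_radiance(e, 0.99)        # what the probe sees off the target
print(e, L)
```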

And that’s it. Once each Spectroradiometer has been calibrated in this way, with the offsets stored on the probe, it’s shipped out to manufacturers, calibrators, and facility people, who in turn use it to calibrate the displays we use in the world of film and video.

In the process of learning how Spectroradiometers are calibrated, I also learned much more about how they actually work, and how they fundamentally differ in operation from Colorimeters. These differences are key to understanding each device’s advantages and disadvantages when you’re choosing which kind of device to use.

Spectroradiometers measure the wavelengths of light directly. Optics gather light through the front lens and focus it onto a “diffraction grating,” a surface ruled with many fine, evenly spaced grooves that diffract light so that each wavelength leaves at a slightly different angle, splitting the light apart much as a prism does. In Spectroradiometers, the quality of these optics determines the quality of the instrument, expressed as a spectral bandwidth in nanometers (for example, the CR-250 is a 4 nm probe, which is considered extremely accurate for purposes of video calibration).
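How a grating spreads wavelengths apart is described by the grating equation, which for light arriving perpendicular to the grating is d·sin θ = m·λ, where d is the groove spacing, m the diffraction order, and θ the angle at which wavelength λ leaves the grating. The sketch below computes first-order exit angles across the visible range; the 600 grooves-per-millimeter density is a hypothetical value, not the CR-250’s actual specification.

```python
import math


def diffraction_angle_deg(wavelength_nm, grooves_per_mm, order=1):
    """Exit angle for normally incident light, from d*sin(theta) = m*lambda."""
    d_nm = 1e6 / grooves_per_mm          # groove spacing in nanometers
    s = order * wavelength_nm / d_nm
    if abs(s) > 1:
        raise ValueError("this wavelength does not diffract in this order")
    return math.degrees(math.asin(s))


for wl in (380, 500, 780):
    print(wl, "nm ->", round(diffraction_angle_deg(wl, 600), 2), "degrees")
```

Longer wavelengths leave at larger angles, which is what lets each sensor pixel sit where a particular slice of the spectrum lands.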

The light split apart by the diffraction grating then falls on the 250-pixel array of the Spectroradiometer’s CMOS sensor, which is set up to measure the 380 to 780 nanometer range that CIE 1931 specifies as the visible range of light. Because Spectroradiometers measure the spectral distribution of light directly, they need no other information about the source being measured.
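Because the probe records the whole spectrum, colorimetric values fall out of a simple weighted sum: each pixel’s reading is multiplied by the CIE 1931 color-matching functions x̄, ȳ, z̄ at that pixel’s wavelength and added up. To keep the sketch self-contained, it uses the published multi-lobe Gaussian approximation of the CIE 1931 functions by Wyman, Sloan, and Shirley instead of the official tabulated data, and it assumes 250 evenly spaced pixels from 380 to 780 nm; a real instrument would use the tabulated CIE data and its own wavelength calibration.

```python
import math


def _g(x, mu, s1, s2):
    """Piecewise Gaussian with different widths left and right of the peak."""
    s = s1 if x < mu else s2
    return math.exp(-0.5 * ((x - mu) / s) ** 2)


def cmf(wl):
    """Approximate CIE 1931 2-degree color-matching functions (Wyman et al. 2013)."""
    x = (1.056 * _g(wl, 599.8, 37.9, 31.0) + 0.362 * _g(wl, 442.0, 16.0, 26.7)
         - 0.065 * _g(wl, 501.1, 20.4, 26.2))
    y = 0.821 * _g(wl, 568.8, 46.9, 40.5) + 0.286 * _g(wl, 530.9, 16.3, 31.1)
    z = 1.217 * _g(wl, 437.0, 11.8, 36.0) + 0.681 * _g(wl, 459.0, 26.0, 13.8)
    return x, y, z


N_PIXELS = 250
WAVELENGTHS = [380 + 400 * i / (N_PIXELS - 1) for i in range(N_PIXELS)]
STEP = 400 / (N_PIXELS - 1)              # nm between pixel centers


def spectrum_to_xyz(spd):
    """Riemann sum of per-pixel spectral data against the color-matching functions."""
    X = Y = Z = 0.0
    for wl, p in zip(WAVELENGTHS, spd):
        xb, yb, zb = cmf(wl)
        X += p * xb * STEP
        Y += p * yb * STEP
        Z += p * zb * STEP
    return X, Y, Z


def chromaticity(xyz):
    X, Y, Z = xyz
    total = X + Y + Z
    return X / total, Y / total


# An equal-energy spectrum should land close to x = y = 1/3.
flat = [1.0] * N_PIXELS
print(chromaticity(spectrum_to_xyz(flat)))
```

No assumption about the display technology appears anywhere in this calculation, which is the practical meaning of “no other information about the source.”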

However, because of the physics of how they function, Spectroradiometers are slow. The diffraction grating is not efficient at transmitting light; two-thirds of the light coming in through the front lens is lost right off the bat. Then, each pixel of the probe’s sensor measures only 1/250th of the remaining light. The only way to compensate for this low sensitivity is to increase the exposure time. This isn’t a problem when measuring bright colors, but it becomes a significant problem when measuring very dark ones. For example, measuring a 3 cd/m² patch requires a 30-second exposure on a Spectroradiometer.
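The light budget is easy to work through with the text’s own round numbers: one third of the light gets through the grating, it is spread over 250 pixels, and a Colorimeter sends about one third of its light to each filtered sensor. These are idealized ratios that ignore filter losses and sensor efficiency.

```python
from fractions import Fraction

# Round figures from the text.
grating_throughput = Fraction(1, 3)       # light surviving the grating
pixels = 250                              # sensor pixels sharing that light
per_pixel = grating_throughput / pixels   # share reaching one pixel

# A three-filter colorimeter sends roughly one third to each sensor.
per_colorimeter_sensor = Fraction(1, 3)

print(per_pixel)                            # → 1/750
print(per_colorimeter_sensor / per_pixel)   # → 250
```

Each spectroradiometer pixel therefore sees roughly 250 times less light than each colorimeter sensor, which is why its exposures have to be so much longer.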

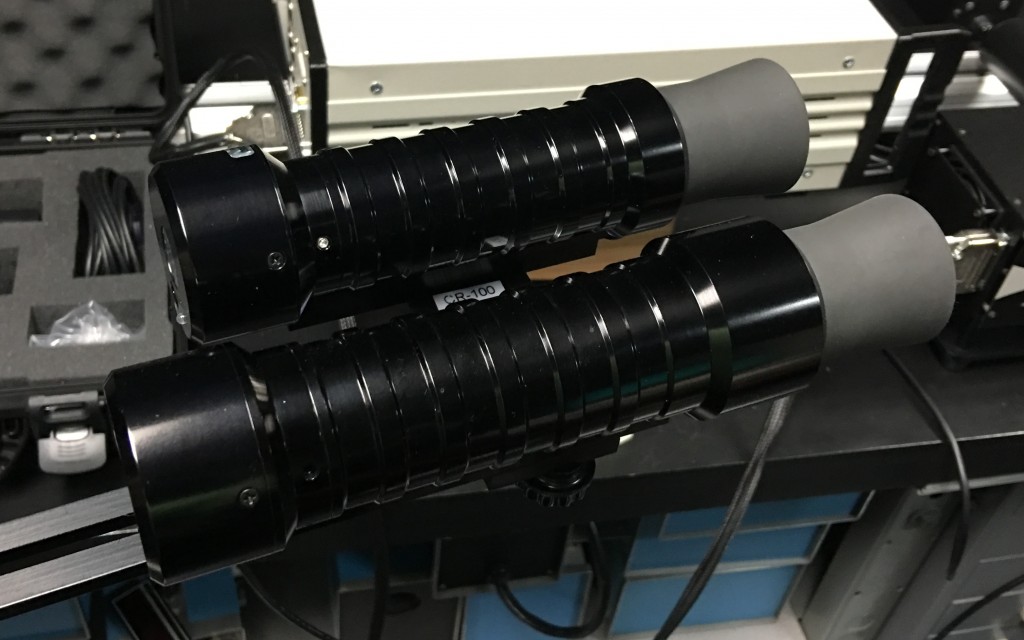

Colorimeters work very differently. Colorimetry Research also makes a Colorimeter, the CR-100, and the basic principle is similar for Colorimeters from other manufacturers. In the CR-100, light coming through the front lens is split and directed through three colored glass filters, one each for Red, Green, and Blue, whose transmission is designed to match the CIE 1931 2-degree standard observer color-matching functions, which model how the human eye, through its cones’ sensitivity to short, medium, and long wavelengths, responds to light. The output of each filter is then measured, with the quality of the measurement depending entirely on how well the filters match the CIE 1931 standard observer model.

Because the sensors reading the output of the Red, Green, and Blue filters each receive one-third of the available light, Colorimeters are extremely fast. The same 3 cd/m² patch that takes 30 seconds to read with a Spectroradiometer takes only 1 millisecond on a Colorimeter. In practice, Colorimeter readings also depend on the refresh rate of the display (in Hz): on a display running at 60 Hz, one refresh lasts 1/60 of a second, so the measurement actually takes about 16.7 milliseconds. Either way, this is considerably faster than a Spectroradiometer.

This greater sensitivity also means that Colorimeters are better at measuring extremely dark colors, with the CR-100 capable of accurate color measurements down to 0.03 cd/m², and accurate luminance measurements down to 0.003 cd/m².

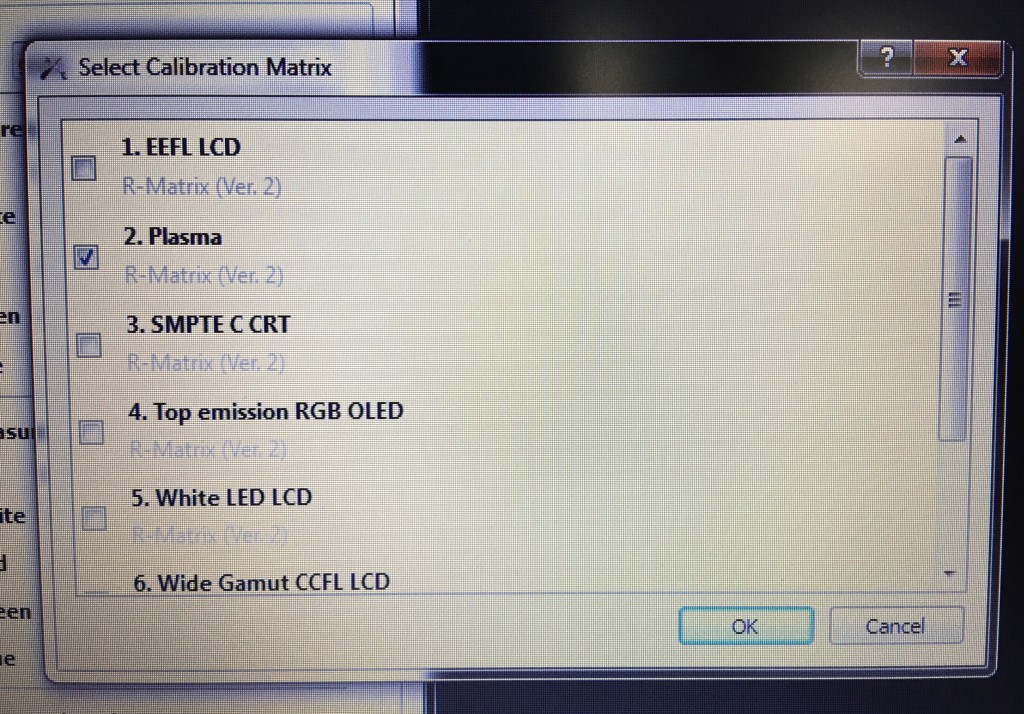

However, because Colorimeters use fixed filters that can only approximate the CIE 1931 curves, they must be supplied with information about the spectral distribution of the particular type of light they’re measuring, since different displays use completely different types of light sources to emit an image. Otherwise, they’ll give inaccurate results. This means you need different profiles for Plasma, Fluorescent-backlit LCD, White-LED-backlit LCD, OLED, and so on. Colorimeters usually store generic profiles on the probe itself (available via pop-up menus in the calibration software you’re using), one for each type of display you might measure, and typically this works fine.
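One common way such a profile is applied is as a 3×3 correction matrix that maps the Colorimeter’s raw filter readings to XYZ for a particular display technology. The sketch below selects a matrix by display type; the matrices and raw readings are made-up placeholders, and how any particular probe actually stores and applies its profiles is an assumption here.

```python
# Hypothetical per-display-type correction matrices (raw filter readings -> XYZ).
# The numbers are placeholders, not real calibration data for any probe.
PROFILES = {
    "white-led-lcd": [[1.02, -0.01, 0.00],
                      [0.00, 1.00, 0.01],
                      [0.01, -0.02, 0.98]],
    "oled": [[0.97, 0.02, 0.00],
             [-0.01, 1.01, 0.00],
             [0.00, 0.01, 1.05]],
}


def apply_matrix(m, v):
    """Multiply a 3x3 matrix by a 3-vector."""
    return [sum(m[r][c] * v[c] for c in range(3)) for r in range(3)]


def raw_to_xyz(raw, display_type):
    """Convert raw (R, G, B filter) readings to XYZ using the chosen profile."""
    return apply_matrix(PROFILES[display_type], raw)


raw = [95.0, 100.0, 108.0]   # hypothetical raw readings of a white patch
for kind in PROFILES:
    print(kind, [round(x, 2) for x in raw_to_xyz(raw, kind)])
```

The same raw reading yields different XYZ values depending on which profile is selected, which is why choosing the wrong profile, or a profile that doesn’t match your particular display, shifts the results.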

However, depending on the quality of your display and the accuracy and age of its backlight, your display’s actual spectral output may diverge from the generic profile on your probe, in which case the resulting measurements may be a little off.

So, the basic choice is between a Spectroradiometer that will be totally accurate for any device but will take a really, really long time to do a full 17 × 17 × 17 sampling of the RGB color cube to profile your display (that’s 4,913 color patches), and a Colorimeter that will do the same 4,913-patch calibration in an hour, but might be a tiny bit off if there’s something obscure wrong with your display.
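The difference adds up quickly over 4,913 patches. As a rough estimate: the one-hour figure for a Colorimeter works out to about 0.73 seconds per patch, including the pause for the display to settle, while a Spectroradiometer averaging a hypothetical 5 seconds per patch (with dark patches taking far longer) would need close to seven hours. The 5-second average is an assumption chosen for illustration.

```python
def profiling_hours(grid, seconds_per_patch):
    """Total time to measure a grid x grid x grid cube at a fixed per-patch time."""
    patches = grid ** 3
    return patches * seconds_per_patch / 3600


patches = 17 ** 3
print(patches)                               # → 4913
print(round(3600 / patches, 2))              # → 0.73 (seconds per patch in one hour)
print(round(profiling_hours(17, 5.0), 2))    # → 6.82 (hours at 5 s per patch)
```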

I’m not trying to scare you. To put this into perspective, many companies get great results using a calibrated Colorimeter’s generic presets to measure a high-quality display. This is yet another reason not to try to use a cheap television or computer display, since displays designed to be color-critical also happen to be easier to calibrate.

However, if you demand total accuracy and total efficiency in every situation, there is another path: use a Spectroradiometer together with a Colorimeter in what calibration applications refer to as offset mode. Both LightSpace and CalMan can do this. It involves using the Spectroradiometer to take four readings from your monitor (Red, Green, Blue, and White), which are then used to calculate an offset for the Colorimeter’s measurements, so that the Colorimeter’s 4,913 readings are accurate for that display at that moment in time.
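The exact math LightSpace and CalMan use in offset mode isn’t described here, but a common textbook approach is a 3×3 correction matrix: measure the red, green, and blue patches with both probes, solve for the matrix that maps the Colorimeter’s XYZ readings onto the Spectroradiometer’s, and use the white reading as a check (more elaborate approaches, such as NIST’s four-color matrix method, fold white into the fit itself). All readings below are hypothetical.

```python
def mat_mul(a, b):
    return [[sum(a[i][k] * b[k][j] for k in range(3)) for j in range(3)] for i in range(3)]


def mat_inv(m):
    """Inverse of a 3x3 matrix via the adjugate."""
    (a, b, c), (d, e, f), (g, h, i) = m
    det = a * (e * i - f * h) - b * (d * i - f * g) + c * (d * h - e * g)
    if abs(det) < 1e-12:
        raise ValueError("singular matrix")
    return [[(e * i - f * h) / det, (c * h - b * i) / det, (b * f - c * e) / det],
            [(f * g - d * i) / det, (a * i - c * g) / det, (c * d - a * f) / det],
            [(d * h - e * g) / det, (b * g - a * h) / det, (a * e - b * d) / det]]


def columns(*vectors):
    """Build a 3x3 matrix whose columns are the given XYZ vectors."""
    return [[v[r] for v in vectors] for r in range(3)]


def apply(m, v):
    return [sum(m[r][c] * v[c] for c in range(3)) for r in range(3)]


# Hypothetical XYZ readings of the same red, green, and blue patches.
spectro = {"R": [41.2, 21.3, 1.9], "G": [35.8, 71.5, 11.9], "B": [18.0, 7.2, 95.0]}
colori = {"R": [40.1, 21.0, 2.1], "G": [36.5, 72.4, 12.4], "B": [17.2, 7.0, 92.8]}

S = columns(spectro["R"], spectro["G"], spectro["B"])
C = columns(colori["R"], colori["G"], colori["B"])
M = mat_mul(S, mat_inv(C))   # maps Colorimeter XYZ onto the Spectroradiometer's

# Check on white (hypothetical readings from each probe).
white_spectro = [95.2, 100.1, 108.6]
white_col = [93.9, 100.5, 107.2]
corrected = apply(M, white_col)
print([round(x, 2) for x in corrected], white_spectro)
```

Once M is known, every one of the Colorimeter’s fast readings can be passed through it, so you get the Spectroradiometer’s accuracy for that display at the Colorimeter’s speed.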

So, if you were wondering why high-quality color probes are so expensive, this glimpse behind the curtain of the technologies involved hopefully provides some, ahem, illumination. That said, I would be remiss not to point out that prices are lower than they’ve ever been: Colorimetry Research’s CR-250 Spectroradiometer goes for $6,990, and their CR-100 Colorimeter for $4,990 (prices taken from Flanders Scientific). There are also many other vendors to consider, including Klein Instruments, Photo Research, Konica Minolta, and X-Rite, to name the ones I’m familiar with.

And hopefully this has clarified the concrete differences between the two kinds of probes, giving you some background for further research in the process of trying to figure out which will be more useful for your application.

The easy answer is usually the most expensive one. Buy one of each.

4 comments

Thanks for this, I’d wondered (idly) how the calibration chain worked. Very interesting.

Thorough and understandable — more or less — look at an impossibly technical topic. Alex, I don’t know where you find the time, but many thanks!

Oh, and did you shell out the 10 thousand clams for all that kit?

Not yet. Still juggling financial priorities.

Great descriptions on colorimeters and photoradiometers. I have learned a lot from this article. I have been learning the visible light spectrum stuff and found this very useful.