Lest you later accuse me of false advertising, I’m admitting right now that I’m not going to tell you what you should buy. Rather, I’ll deliver an epistemological monologue about choosing monitors that may accidentally be helpful to you. I welcome comment, especially from those of you who are genuine color scientists. Just please be nice to each other.

I’m talking color critical displays, and gird your loins, because this post is a long one.

Before We Begin

Okay. I lied. I’ll do you a favor and give you a recommendation that will free you from needing to read the rest of this article. Pull together $30,000 and buy a Dolby PRM-4200. It’s big (42″), it has nice deep blacks because of its insane backlighting technology, it’s got stable color, excellent shadow detail, multi-standard support, contains the full gamut of both Rec 709 and P3 for DCI work, and it’s a giant piece of equipment that will impress everyone who comes into your suite.

Also, the European Broadcasting Union (EBU) has declared that the Dolby is replacing CRT as the new standard reference monitor for their compression codec testing (May 2012, Post Magazine). If it’s good enough for the EBU, it’s good enough for me, and nobody is going to complain about working with one of these. No, I haven’t tested it. Yes, I’ve seen it in a controlled environment, and based on unscientific observation it looked fantastic. I’m electing to trust that Dolby and the EBU have done their jobs, so you can buy yourself out of this whole debate. I’d get one if I could.

On the other hand, if like me you don’t have $30K to spend on a display, read on.

Plusses and Minuses

My friends, we are making ourselves crazy. To a certain extent, this is necessary. We are professional colorists, and we require excellence in our display technologies. However, in the pursuit of excellence, we have been set to the task of achieving a pinnacle of perfection, while being given imperfect tools.

First off, I am not a color scientist. I am a pro user, but when it comes to display technology, I rely on expert information from a variety of sources. At the end of the day, I never recommend any display I haven’t personally seen, but I don’t claim to have rigorously tested the calibration profiles of different displays, other than my own.

What I usually do is what any educated consumer does: poll experts I know, check with company representatives when possible, and lurk the TIG and other online forums like crazy, collating every specific opinion I read from other colorists in the field about what they see, what they like, and why.

It seems to me that shopping for a color critical display is similar to being an audiophile—you can make yourself crazy searching for the Nth degree of perfection. Unlike audio technology, displays are subject to a concrete standard: Rec 601, Rec 709, or P3 dictating the gamut, a gamma setting that depends on your specific application (more on that here), and a specific peak brightness measured in foot-lamberts (more on that here).
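Since peak brightness gets quoted in foot-lamberts in some places and in candelas per square meter (nits) in others, here is a small conversion sketch. The conversion factor is the standard one; the function names and the 30 fL example value are my own illustrative choices, not a recommended target.

```python
# 1 foot-lambert expressed in candelas per square meter (nits).
CD_M2_PER_FL = 3.4262591


def fl_to_cd_m2(foot_lamberts):
    """Convert luminance from foot-lamberts to cd/m^2."""
    return foot_lamberts * CD_M2_PER_FL


def cd_m2_to_fl(cd_m2):
    """Convert luminance from cd/m^2 to foot-lamberts."""
    return cd_m2 / CD_M2_PER_FL


# Arbitrary example value, only to show the arithmetic.
print(round(fl_to_cd_m2(30.0), 1))  # 102.8
```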

However, where once there was a single display technology, CRT, that served the entire industry, now there are legion. LCD with LED backlighting, LCD with fluorescent backlighting, different models of Plasma, OLED panels, LCOS Projectors, and DLP Projectors all vie for the dollars of colorists furnishing their grading suites.

Furthermore, the actual light-emitting technology is just one aspect of a display. Then there is the hardware and software that transforms an HD-SDI signal into a streaming wall of photons within the appropriate gamut, at the appropriate gamma and foot-lamberts. And, for every single display in existence, this requires some manner of calibration.

Speaking as an end user, display calibration is a frustrating field to follow. The frustration is thus: you’re told to adhere to a standard, and theoretically that’s cut and dried. Here are the numbers, make the display match the numbers. In practice, getting your display to match those numbers is a pretty challenging task, and different probes and software do this differently, and the results have minor deviations from one another, and then everyone gets to quibble about whose delta-E is smaller. (Crudely put, delta-E is the measured difference between what your display is showing, and what it should be showing, during a controlled calibration procedure.)
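To make the crude definition of delta-E a little more concrete, here is a minimal sketch using the simplest formula I know of, CIE76, which is just the straight-line distance between two colors in CIELAB space. Calibration packages may well use newer formulas; the function name and the sample Lab values below are invented purely for illustration.

```python
import math


def delta_e_cie76(lab_measured, lab_target):
    """CIE76 delta E*ab: Euclidean distance between two (L*, a*, b*) triples."""
    return math.dist(lab_measured, lab_target)


# Hypothetical patch: what the probe read versus what the standard asks for.
target = (50.0, 0.0, 0.0)
measured = (51.0, 2.0, -2.0)
print(delta_e_cie76(measured, target))  # 3.0
```

A smaller number means the measured patch sits closer to its target; zero would be a perfect match.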

Consider probes. I’ve had various conversations with different folks, and read many articles, and the general consensus is that (a) hugely expensive probes are accurate, (b) even with expensive probes, different folks have different favorites, and (c) below a certain threshold ($10K) different probes are good at measuring different ranges of tonality. So, as with all things, if you’re not spending a shed-load of money, then virtually any decision you make is a compromise of some sort, and you won’t get perfection. You’ll get something somewhere close.

Now, consider calibration software, whether stand-alone (a package such as LightSpace, CineSpace, or Truelight), or built into a display like the Flanders Scientific or TVLogic at the low end, or the Dolby PRM-4200 at the high end. Each of these vendors will tout the advantages of their system, and the excellence of their color scientists, in crafting the ideal algorithms for mastering the heavy math of measuring and transforming accurate color. They all employ smart folks, so how do you choose?

And we haven’t even gotten to the displays themselves. Again, in the absence of a single technology that everyone in our industry can agree on, we’re stuck comparing different trade-offs. Here’s what I perceive at the moment:

- LCD (fluorescent backlight)—Plusses: Inexpensive (relatively), stable color, wide choice of vendors offering different sizes; Minuses: narrower viewing angles than Plasma, a black level that’s comparatively light (how this is perceived depends on viewing conditions)

- OLED—Plusses: Deep black level, stable color, appealing image quality; Minuses: even narrower viewing angles than LCD, 24″ is the largest realistically available as of this writing, reports of a perceptual “magenta tinge” with older observers are worrisome

- Plasma—Plusses: Wide viewing angle, inexpensive at large sizes (55″+), deep black level; Minuses: less stable color requires more frequent calibration than other technologies (still better than CRT), slight crushing of data in the very darkest shadows, Automatic Brightness Limiter (ABL) circuit modifies images with brightness above a certain threshold and is worrisome

- Projection—Plusses: Wide viewing angle, huge image size, stable color, unique image quality matching the theatrical experience, “budget” models from JVC are inexpensive relative to size; Minuses: unique image quality matching the theatrical experience (it will never pop like a self-illuminated display), DCI-compliant high-resolution models are expensive and require infrastructure (cooling, a booth, etc.), you need more space than a simple display.

So there you go. No one relatively affordable display technology has everything you want. Period.

What does this mean? Are we all screwed? Do we lose sleep because, whatever decision we made, we’re wrong and the programs we’re grading are all catastrophically off by some obvious margin?

No. Of course not. Across the world, there are hundreds upon hundreds of grading suites and thousands of multi-purpose video postproduction suites that are using what have been represented as calibrated, color critical versions of every technology I’ve just described. And they’re all producing hundreds of thousands of hours of programming and entertainment, much of which you probably watch on cable, at film festivals, in theaters, on your computer screens, and on your portable devices.

In my case, I’m using Plasma, my trusty VT30 series Panasonic display. Yes, it has the ABL circuit, and on Steve Shaw’s advice I’ve taken special care to adjust the calibration patch size to avoid its effects when I use LightSpace to generate LUTs for it. Is this circuit kicking in during some portion of whatever program I grade? Probably, but to be honest I’ve never noticed an artifact during a session, it’s never impeded my decision making, and I’ve never had a client come back with a program I’ve delivered and say “what the hell did you give me, Hurkman!” And yes, I realize I’m missing some values of detail down at the bottom blacks, but again it’s nothing that’s stopped me from making creative decisions, and I’ve got a set of HD scopes to show me the data of my signal to make sure I know what’s there for QC purposes. I also have a CRT that I baby, but it’s got SMPTE-C phosphors, so it’s not really a match, though it’s close.

When I decided to go plasma, and I can’t stress enough that this is a personal decision that should be based as much on your particular clientele and needs as on your budget, it was based on the following: I like the blacks of plasma, I like having a larger display so my clients can comfortably sit behind me, and I like the viewing angle so that several clients can sit off axis (even in my home suite, it happens).

What’s Everybody Else Using?

My decision was also based on a certain critical mass that I perceived—as of 2010 I was aware of many high-end post houses that installed plasma in their grading suites as hero monitors, and I decided if it was good enough for them, then it’d be good enough for me.

And at the end of the day, I believe that’s a fair call. I want to trust my display, and I want my clients to trust my display. If I’ve got a technology that’s deployed elsewhere, that provides consistency and some confidence for all involved with the process. That was the advantage of the old Sony CRTs; everybody had one, so nobody had any doubt. It’s the same reason you see Genelec speakers in every editing suite you’ve ever been in. Sure, they’re great speakers, but it helps that no client is going to walk into a room and say, “what the hell kind of speakers are you using here, anyway?”

Time and technology march on. When I was in New York, my clients were a bunch of indie filmmakers, and I used a calibrated JVC DILA projector to give them the theatrical experience. Now I’ve got a Plasma I’ve been using for a year and a half, as I have a smaller room and am doing more commercial/broadcast types of work. If I were to get something new today, I’m not sure what I’d get; maybe another Plasma, or possibly an LCD-based display to save a little space in my current independent suite, it’s hard to say.

We Need A Tolerance

I’m going to go out on a limb and say that I believe our industry desperately needs a SMPTE-recommended tolerance for Rec 709 displays. In other words, yes we know what the standard is for HD video, but how far can we acceptably deviate from this standard before the warning buzzer goes off and we end up getting dunked in the clown pool?

And no, I’m not completely insane. There is precedent in the specified tolerance for DCI-compliant reference projectors; go out and find SMPTE Recommended Practice document RP 431-2 (this is also discussed in Glenn Kennel’s excellent Color and Mastering for Digital Cinema).

There are actually two projector tolerances given, a more conservative tolerance for review rooms (that’s you), and a slightly more liberal tolerance for theaters (the audience). For review rooms, Gamma is allowed to be ±2%, and color accuracy is allowed to be ±4 delta E*. I imagine this is to accommodate some slight aging of the projector bulb, but the point is if you’re within this tolerance, you’re good.
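A pass/fail check against the review-room figures quoted above could be sketched like this. The two limits come straight from the paragraph; reading "±2%" as a percentage of the target gamma is my assumption, and the function name, the target gamma of 2.6, and the measurements are hypothetical examples rather than anything taken from the SMPTE document.

```python
def within_review_room_tolerance(measured_gamma, target_gamma, delta_e,
                                 gamma_tol=0.02, delta_e_tol=4.0):
    """Return True if both gamma and color error are inside the tolerance.

    gamma_tol is treated as a fraction of target_gamma (an assumption);
    delta_e is the measured color error for a patch.
    """
    gamma_ok = abs(measured_gamma - target_gamma) <= gamma_tol * target_gamma
    color_ok = delta_e <= delta_e_tol
    return gamma_ok and color_ok


# Hypothetical readings against an example target gamma of 2.6.
print(within_review_room_tolerance(2.64, 2.6, delta_e=3.1))  # True
print(within_review_room_tolerance(2.70, 2.6, delta_e=3.1))  # False (gamma off)
print(within_review_room_tolerance(2.60, 2.6, delta_e=5.2))  # False (color off)
```

That is the whole appeal of a published tolerance: the answer is a yes or a no, not a forum thread.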

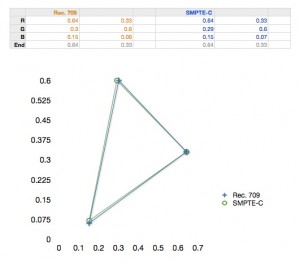

Absolute perfection, for us independent post folks, is cost prohibitive. I would also argue that it’s demonstrably unnecessary for professional work. I say demonstrably because Sony CRT monitors are still widely in use in high-end color grading suites across the world, and these CRTs are not set up to display Rec 709 primaries. They use SMPTE-C phosphors, which have different primaries, so the resulting gamuts closely align but do not perfectly match. Most folks will tell you that the reds appear subtly different when compared. Here’s a plot of the tri-stimulus primary values of Rec. 709 and SMPTE-C, compared.

However, they’re really close, and the truth is, if you review a program on a CRT, then output it to tape, get in your car, drive across town, get a sandwich, drive the rest of the way to the other post house, load the tape, sit in a different suite with a Rec 709 display, and watch the program, you probably won’t notice any difference, because the variation is only really going to be apparent if the two displays were sitting side by side, and we humans don’t have particularly good scene-specific color memory, and besides all color is relative to the other colors in the scene, and likely the interior of the gamut is going to be more consistent, so hooray.
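For anyone who wants numbers rather than a plot, this sketch compares the published CIE 1931 xy chromaticities of the Rec 709 and SMPTE-C primaries. The coordinates are the commonly cited values for each standard; the helper names are mine, and plain distance in xy is only a rough indicator, since xy space is not perceptually uniform.

```python
import math

# CIE 1931 xy chromaticity coordinates of the red, green, and blue primaries.
REC709 = {"R": (0.640, 0.330), "G": (0.300, 0.600), "B": (0.150, 0.060)}
SMPTE_C = {"R": (0.630, 0.340), "G": (0.310, 0.595), "B": (0.155, 0.070)}


def gamut_area(primaries):
    """Area of the gamut triangle in the xy plane (shoelace formula)."""
    (x1, y1), (x2, y2), (x3, y3) = (primaries[k] for k in ("R", "G", "B"))
    return abs(x1 * (y2 - y3) + x2 * (y3 - y1) + x3 * (y1 - y2)) / 2


def primary_shift(a, b):
    """Distance in xy between matching primaries of two gamuts."""
    return {name: math.dist(a[name], b[name]) for name in a}


print({k: round(v, 4) for k, v in primary_shift(REC709, SMPTE_C).items()})
print(round(gamut_area(SMPTE_C) / gamut_area(REC709), 3))
```

By this crude measure the SMPTE-C triangle covers a bit over 90% of the area of the Rec 709 triangle, with each primary shifted by roughly 0.01 in xy.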

Why am I bringing this up? Because if suites at the highest levels of our industry continue to employ display technologies with demonstrable variation from the Rec 709 standard, in pipelines involving other displays that are more closely adherent to the Rec 709 standard, then that means there’s already an acceptable tolerance being informally used. It’s just not being admitted to or documented.

I’m not saying we shouldn’t strive for accuracy. I’m simply suggesting that what tolerance or margin for error is pragmatically acceptable needs to be more closely considered, ratified, and documented, so that we can all stop debating endlessly about what we should buy. Instead, a given display is either within tolerance or not, and there’s enough wiggle room for realistically minimum perceived differences between different technologies.

At that point, if a display or display type is outside the tolerance, then it’s easily discarded. And if it’s within tolerance, then you’re good to go, free of fear. The very thought of this lowers my blood pressure considerably.

P.S.

By the way, if there’s already an acceptable tolerance for reference Rec 709 displays and I just don’t know about it, please enlighten me. I would love for this to be something that’s already defined.

12 comments

I’m not sure which monitor to get. I’m hesitating between a 25″ Sony OLED PVM2541 and a 55″ Panasonic VT50. I don’t really want a big monitor but OLEDs aren’t cheap.

What would you choose based on the best picture quality and color fidelity?

As I mentioned, I’m not making specific recommendations, and I don’t have direct experience with either of those displays. I hear good things about the Sony OLED and VT50 series both, but I also know and understand the issues with both displays that I’ve outlined. I’ve only used an OLED (the BVM model) for one day; it was a brilliant display, but I did notice the limited viewing angle. Otherwise, I liked it, but I understand that the PVM has different tolerances than the BVM series, so I don’t know how representative my experiences were. Really, which display would suit your needs depends on your application, clientele, and budget.

Richard Kirk, Color Scientist at FilmLight Ltd., sent me this response, which he allowed me to repost here:

I notice you went with the JVC DILA for projection; do you remember what model number you used?

Is there a reason you went with that over something like the Samsung A800?

In the end, did you feel like DILA gave you what you needed and hit Rec. 709?

I had the JVC RS2. After researching all of the sub-$15,000 projectors then on the market, getting recommendations from the calibrator I was then using on the east coast, and checking out projector output in person, I decided on the JVC because of three reasons, (a) the DILA technology results in stunning blacks without the need for automatic iris nonsense, (b) my calibrator recommended an outboard calibration workflow (using a Lumagen box) that worked well for accurate Rec 709 color, and (c) several other post houses of my acquaintance were also using either that unit, or the earlier RS2.

JVC’s high-end home theater projectors continue to have a great reputation, and I think are still the best choice for non-2K theatrical-style monitoring in smaller theaters that have no room for a dedicated projector booth and accompanying infrastructure. At the time, in NYC, I was doing quite a bit of indie film grading for projects going to the festival circuit, and the projected image has a distinctly different vibe than one from a self-illuminated screen, mainly because the image is reflected, and the white level is significantly lower.

I really only recommend projection monitoring for projects needing theatrical distribution, but then it’s fantastic to be able to work in context to the audience’s experience. However, if you’re working on broadcast or commercial spots, I think having a self-illuminated display in turn provides more insight into what the living-room audience will likely experience.

Hi, your book is excellent; it helped me so much with grading. Just found out who wrote this article haha! Thanks!

Thanks for the great article!

“By the way, if there’s already an acceptable tolerance for reference Rec 709 displays and I just don’t know about it, please enlighten me. I would love for this to be something that’s already defined.”

I don’t know how much this will help you, but the EBU has monitor grading specifications which clearly define tolerances for most parameters associated with SMPTE C and Rec. 709. Probably since Rec. 709 is a consumer-type deal anyway, they might have decided it is not worth updating.

By the way, I’m planning on getting a Panasonic Plasma as reference, but I’m also open to 24″ OLEDs. Just a simple editing suite, nothing fancy. Any suggestions? Is it cheaper?

Hi, I dredged this article up via Google but I had a question regarding your use of a CRT. I have a small suite set up with a JVC CRT with HD-SDI card mostly for grading stuff that goes to broadcast and web. I am wondering if it is advisable to go to an LCD or other newer technology monitor for a small setup like mine or if it wouldn’t really be worth the money.

The rule I’ve always heard is that CRTs have a useful lifespan to the colorist of 2-3 years when in regular use (of course “regular use” varies with one’s workload). Depending on how old your CRT is getting, that alone might make it worth going LCD or OLED. Furthermore, CRT phosphors aren’t actually precisely aligned with the primaries of the BT.709 standard that everyone currently tries to adhere to, so that’s another reason to upgrade. The current lineup of color critical LCD displays from companies like Flanders Scientific, TV Logic, and the other usual suspects are very good and extremely affordable by comparison to older tube-based critical displays, and Flanders just dropped the price of their OLED display, which is one of the newest and most exciting color critical technologies to become available (Sony being another vendor of this technology), so there are many options. If I were you, I’d probably upgrade. (I just put my CRT out to pasture two months ago, but I hadn’t turned it on for two years prior, so there you go.)

Hey,

great post! Choosing a color critical monitor is a major decision and one that needs to be carefully researched.

I’m curious as to what you think about color critical desktop monitors like the DreamColor or the new NEC – PA242W-BK-SV 24″ Professional Wide Gamut LED Desktop Monitor.

Yes, they are computer monitors with the obvious lack of SDI inputs etc., but budget and spec wise, they would seem an ideal alternative to a production monitor due to features like a 10-bit panel, 3D LUT, and the ability to load specific profiles like Rec 709, DCI, etc.

What do you think about that?

Also, it might seem a newbie question, but what is the main drawback to grading with a color critical computer monitor like the NEC and DreamColor as opposed to, say, the Panasonic plasma or a Flanders Scientific?

Your response will be appreciated.

I don’t have any hands-on experience with these two displays, so I’m afraid I have no advice to give. The main drawback with using pro computer displays is a lack of adjustability for broadcast video applications. Pro video displays typically have adjustable gamma, adjustable colorimetry, and specific settings governing things like whether image data is read as full-range or studio-range. These kinds of explicit settings let you customize the display to your application, but more to the point they let you see exactly what it is your display is set to, which is important to having confidence in what you’re doing. Also, built-in HD-SDI inputs spare you any problems that an HD-SDI to HDMI adapter might introduce, if any. Basically, the fewer boxes you can have in your signal chain, the fewer potential problems you’ll need to contend with.
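To illustrate why the full-range versus studio-range setting matters, here is a rough sketch of how luma or RGB code values map between the two. It assumes the usual convention of black at 16 and white at 235 in 8-bit (64 and 940 in 10-bit) for studio range; the function name is hypothetical, and chroma channels, which use a different range, are deliberately left out.

```python
def studio_to_full(code, bit_depth=8):
    """Map a studio-range luma/RGB code value to full range, clipping overshoot."""
    scale = 1 << (bit_depth - 8)           # 1 for 8-bit, 4 for 10-bit
    black, white = 16 * scale, 235 * scale
    full_max = (1 << bit_depth) - 1        # 255 for 8-bit, 1023 for 10-bit
    value = (code - black) * full_max / (white - black)
    return max(0, min(full_max, round(value)))


print(studio_to_full(16), studio_to_full(235))          # 0 255
print(studio_to_full(64, 10), studio_to_full(940, 10))  # 0 1023
```

If a display reads studio-range data as full-range (or the reverse), blacks end up lifted or crushed and whites dimmed or clipped, which is exactly the kind of thing an explicit menu setting lets you rule out.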