It’s time for a new Vectorscope graticule. The graticule (sometimes called a reticle) is the overlay that presents targets, reference lines, crosshairs, and other guides that help you interpret the trace or graph of a Vectorscope’s analysis. Older hardware-based Vectorscopes had the graticule silkscreened on a plastic overlay, so it was fixed and unchanging.

I’ve used several different hardware- and software-based Vectorscopes over the years. Speaking as a colorist and not a broadcast engineer, I find them useful for comparing saturation levels between clips, for comparing the hue angle of specific features that appear in multiple clips, for QC checks that the signal is within tolerance, and for spotting overall errors in hue. Creatively, they’re useful for evaluating how much color contrast you’ve got in your image, and in which direction the average color balance or dominant color temperature of the scene is leaning. Once you learn to read the graph of a Vectorscope, there’s a lot you can see.

Despite all this utility, the average HD vectorscope graticule in this day and age of graphically drawn software scopes shows nothing but boxes to indicate each of the target hues found in the 75% color bars test pattern, sometimes a second set of boxes for 100% bars, usually a tiny crosshairs to indicate the very center of 0% saturation, and maybe an In-phase indication line (or skin tone indicator line, depending on who named it). Other than that, you’re looking at a big black area with a blob of a graph at the center that shows you all the data.

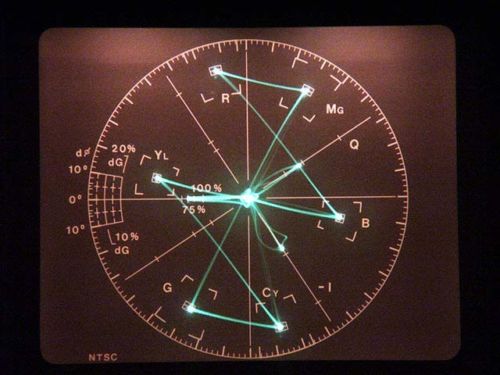

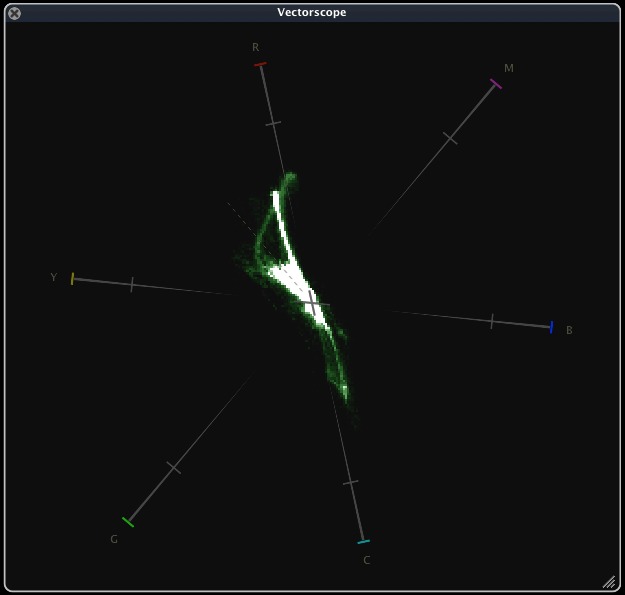

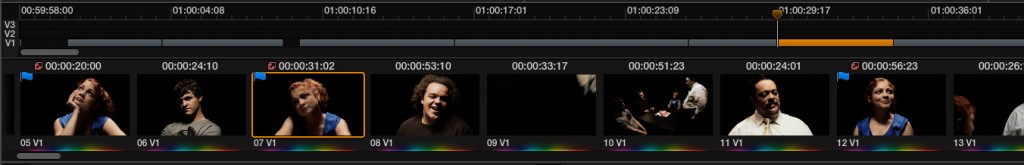

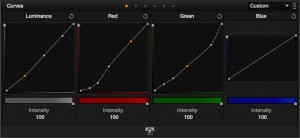

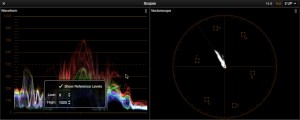

In the screenshot above, I chose the Final Cut Pro X Vectorscope as an example not to pick on it, but because it’s actually a nice implementation (especially now that they put the center crosshairs back in, which had disappeared during a previous update). However, it’s also an example of a brand new piece of 2013 software that’s implementing a vectorscope graticule that wouldn’t look out of place in the eighties.

More to the point, the following picture shows the graticule presented by my rather expensive Harris VTM 4100 vectorscope (when analyzing an HD signal). It’s got boxes to show the angle of each hue, it’s got crosshairs, but that’s pretty much it as far as any kind of scale goes.

I understand the idea of simplifying the visuals of the scope. Folks with software Vectorscopes are likely using them for creative and comparative analysis, rather than as tools for signal alignment. Furthermore, a lot of the lines and indications from the analog days just aren’t meaningful anymore when examining a digital signal. I’m sympathetic to the goal of freeing the colorist’s eye from the unnecessary clutter of legacy scopes.

However, what I find I’m missing within the sparse landscape of the modern vectorscope is some kind of scale of hue to aid me in the process of signal comparison. While the little standard box targets do a fine job of suggesting the direction of each of the primary and secondary hues, I’ve long wished there was a more concrete guide showing the actual vectors of hue for reference at a variety of intensities of saturation. Given the little boxes’ distance from the traces of most shots of average saturation, one essentially needs to “eyeball” a given trace’s position relative to the angles of absolute red, or blue, or yellow.

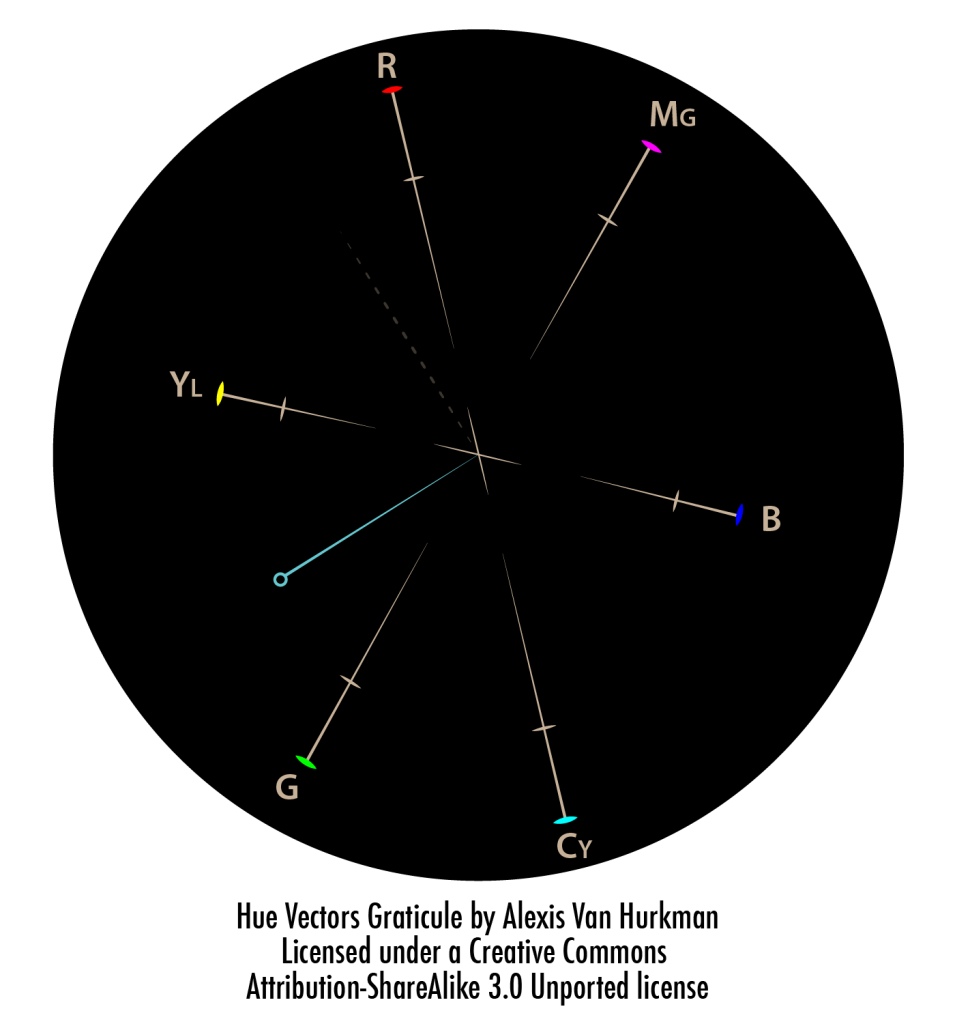

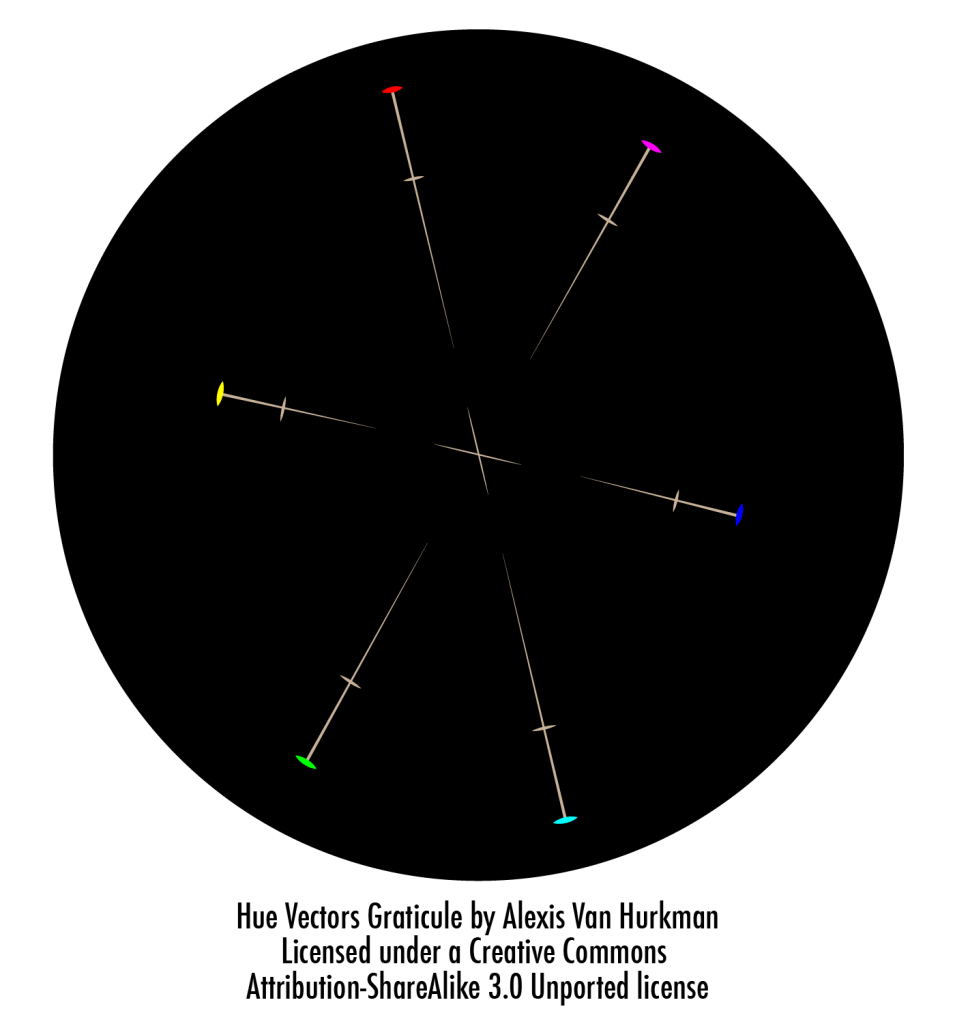

So I designed my own graticule.

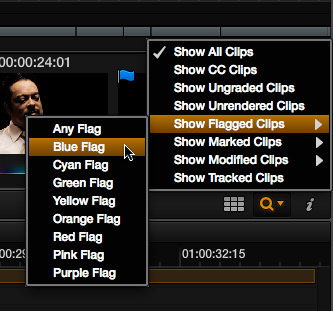

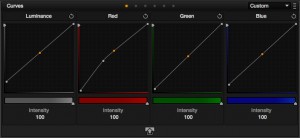

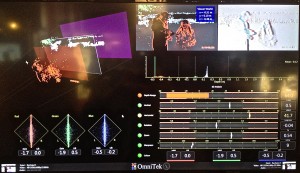

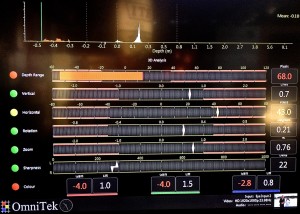

My design goal was to find a clean, uncluttered way of providing hue angle guidance at a variety of levels of saturation, while retaining the useful guideposts we’ve come to rely on from previous designs. The image up top presents all of the options in my current design, but I recommend that any implementation include the option to turn off the dotted skin tone indicator and the user-adjustable reference line. The simplified result appears below. Overall, I’m trying to present a more visible scale of the crucial angles of hue, while still keeping the graticule simple and immediately comprehensible.

Here is an explanation of the features of this design.

- 75% intensity tic marks that correspond to the same angles and center-points as the hue boxes in traditional Vectorscopes. The intersection of each inner tic mark and its hue vector line shows the dead center of that hue at 75% intensity. The outer tic marks indicate the boundary of 100% intensity.

- Long lines that indicate the vectors of each of the primary and secondary hues, stretching from 100% intensity to 22.5%. These lines thin and fall off towards the inner 22.5% boundary, leaving the center of the vectorscope clear to provide an uncluttered view of the subtle traces that describe the most common levels of saturation in most average images, while the pointed tips still provide a useful reference of each hue when evaluating these smaller traces. The objective of these long hue lines is to provide angular reference indicators that are more easily seen and remembered when comparing the traces of differently shaped graphs for multiple images. Furthermore, these long lines all point towards the critical center of the display, providing clear visual guidance without the need for full vertical and horizontal crosshairs.

- A center crosshairs that indicates the crucial 0% saturated center of the graph, oriented along the Red/Cyan and Yellow/Blue axes. This orientation provides a clear warm/cool visual reference that will be useful for beginners, and for tired professionals and their clients at 4am. The crosshairs should be big enough to be seen clearly, but small enough not to impede the detail found in smaller traces.

- An optional dotted line indicating the traditional In-phase/Skin Tone vector. Dotted to reinforce the idea that this indicator is a reference, and not a rule.

- A user-adjustable vector reference that can be positioned at any angle and percentage. If you need to match a product color precisely from shot to shot to shot, a user-adjustable indicator that can be put right where you need the trace to be is exceptionally handy.

- My original design presented simple letters to indicate the hue of each vector, but Mike Woodworth suggested color-coding the outer 100% tic marks as well to provide a more immediately identifiable UI. The colors shown in the above PNG are exaggerated for clarity; with darker colors, I don’t find the colored tics distracting, but this can always be adjusted along with the brightness of the rest of the graticule. How customizable to make these display options (turning off colors separately from letters, for example) is an implementation choice.
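For developers, here is a minimal sketch of how the geometry described in this list could be computed. It is not how ScopeBox or any other product implements it. It assumes an HD (Rec. 709) signal, the usual scope layout with Cb on the horizontal axis and Cr on the vertical axis, and that each percentage scales a hue's 100% color-bar vector. The names `HUES`, `chroma`, and `hue_vector` are hypothetical, made up for this illustration.

```python
import math

# Rec. 709 luma coefficients, the ones used for HD signals.
KR, KB = 0.2126, 0.0722
KG = 1.0 - KR - KB

# Primary and secondary hues as 100% R'G'B' triples (hypothetical labels).
HUES = {
    "R": (1, 0, 0), "Mg": (1, 0, 1), "B": (0, 0, 1),
    "Cy": (0, 1, 1), "G": (0, 1, 0), "Yl": (1, 1, 0),
}

def chroma(r, g, b):
    """Return (Cb, Cr) for normalized R'G'B', each component in -0.5..0.5."""
    y = KR * r + KG * g + KB * b
    return (b - y) / (2 * (1 - KB)), (r - y) / (2 * (1 - KR))

def hue_vector(name, inner=0.225, tick=0.75, outer=1.0):
    """Geometry for one hue line: its angle, its end points, and its 75% tic."""
    cb, cr = chroma(*HUES[name])

    def at(k):
        # Point at fraction k of the way from the center to the 100% target.
        return (k * cb, k * cr)

    return {
        # Angle measured counterclockwise from the positive Cb axis.
        "angle_deg": math.degrees(math.atan2(cr, cb)) % 360,
        # Assumption: the long line runs from 22.5% out to 100%.
        "line": (at(inner), at(outer)),
        "tic_75": at(tick),
    }

if __name__ == "__main__":
    for name in HUES:
        v = hue_vector(name)
        print(name, round(v["angle_deg"], 1), v["tic_75"])
```

Because chroma is a linear function of R'G'B' and neutral gray has zero chroma, complementary hues land at opposite ends of the same line through the center. That is why a crosshair aligned with the Red/Cyan and Yellow/Blue axes can be drawn as two straight lines.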

When I first worked this design up, I was of course concerned that it would never see the light of day. I know how busy developers are, and I was afraid that this would end up being a very low priority.

However, when I mentioned what I was doing to Mike Woodworth, developer of ScopeBox, he was genuinely interested. After some conversation, I decided to offer Mike the design free for inclusion in ScopeBox first, with the understanding that upon its release, I would immediately publish the design here on my blog under a Creative Commons license, so that any developer or company who wants to incorporate this graticule into their product (even commercial products) is free to do so. I want to remove any barrier to adding this to a Vectorscope implementation, but I also want to make sure that anyone can include it. Really, I just want to be able to actually use it in real products.

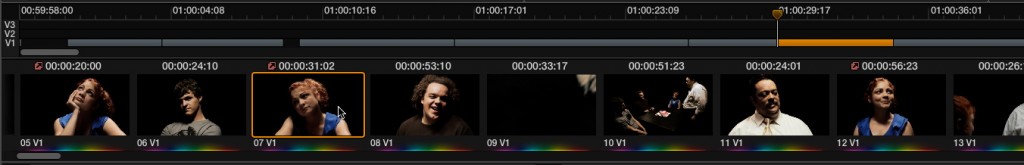

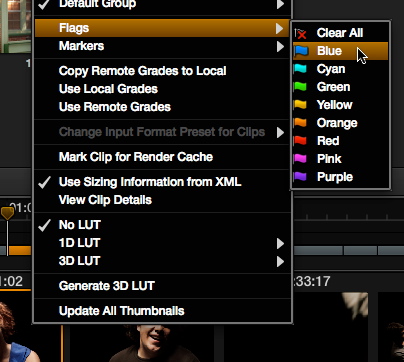

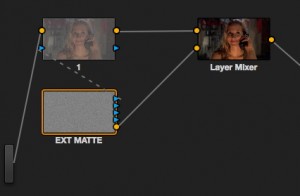

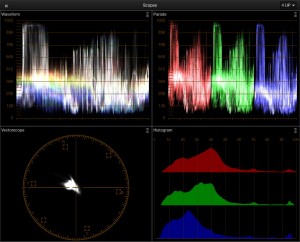

Concurrent with this article’s publication, Mike is releasing ScopeBox 3.2, which offers this graticule as an option with all the features described here (choose Hue Vectors from the Grat Style pop-up menu) alongside the previously available graticule. I’ve been running a variety of clips through it, and have used it very lightly for a scene or two of grading, and can honestly say that I find it useful.

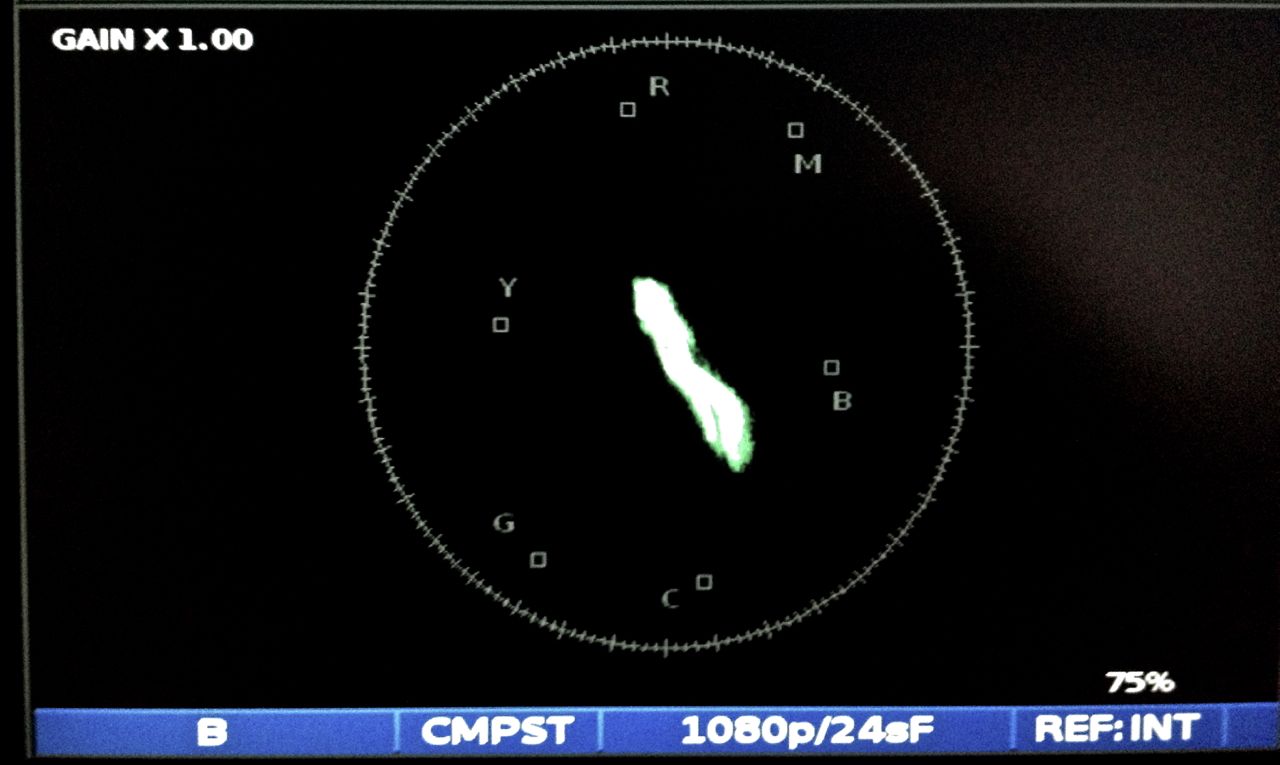

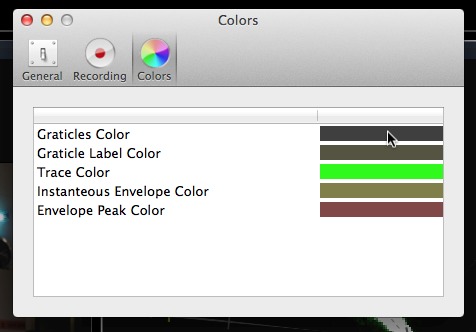

I’ll share one observation about the ScopeBox implementation. I like to open the ScopeBox preferences and darken the default “Graticles Color” to obtain a nice, subtle graticule that is lightly visible without calling too much attention to itself (as you can see in the image above). With a darker graticule color, I find the Grat Intensity slider moves along a more reasonable scale of light to dark for my taste. The magic of user preferences is that you can set this up as you like.

If you’re a ScopeBox user, give this option a whirl and by all means let me know what you think. I invite comment.

And if you’re a software developer or manufacturer of video scope software or hardware and this looks interesting to you, please download the PNG files in this article as a starting point, or give me a call. I mean what I say: this is a Creative Commons-licensed design, and I’ll be happy for you to incorporate it into your product. I want to see it used, and I want it to be available to anyone who wants to implement it, free of the briar patch of patent restriction. This is my first foray into trying to make a design available in this way, so here are the provisos, courtesy of creativecommons.org:

Attribution — You must attribute the work in the manner specified by the author or licensor (but not in any way that suggests that they endorse you or your use of the work). A simple mention in the attribution front-matter of your documentation is fine; “Hue Vectors graticule designed by Alexis Van Hurkman.”

Share Alike — If you alter, transform, or build upon this work, you may distribute the resulting work only under the same or similar license to this one. I would like improvements to this design to ripple out to the wider community. This applies only to the Graticule design, not to your entire product. Just as existing graticule designs aren’t copyrighted, I don’t want useful alterations to become themselves restricted.

Waiver — Any of the above conditions can be waived if you get permission from me. Click the contact page and drop me some mail, or give me a call if you’ve already got my number. I’m always happy to chat.

And finally, here’s a link to all the Creative Commons legalese.

Hue vectors graticule by Alexis Van Hurkman is licensed under a Creative Commons Attribution-ShareAlike 3.0 Unported License.

Permissions beyond the scope of this license may be available at http://vanhurkman.com/wordpress/?p=2563.